Colleagues who have worked with me in the last ten years know I have some religion about appliances. To a large degree, I believe that most enterprise software that is installed in a customer’s data center should be packaged as an appliance. The embodiment of this could be as a physical appliance or as a virtual software appliance, but either way, it’s an appliance.

My sense is that appliances, especially virtual appliances, are picking up momentum. We’re seeing more and more software companies offering virtual appliances on the market today and more products that could be packaged as software instead packaged as a hardware offering.

There’s a problem though.

Most of these appliances are crap.

What is an Appliance?

It would be cliché to turn to Merriam Webster for a definition, so let’s consider what the Oxford American Dictionary says an appliance is:

ap-pli-ance n. 1 a device or piece of equipment designed to perform a specific task

So if we’re building an enterprise IT appliance, it’s going to be a virtual machine or a physical machine to perform a specific task.

In my mind I’ve always given Dave Hitz, co-founder of NetApp, credit for defining an appliance, but in this interview [PDF], he gives credit to the model Cisco established:

Hitz: […] We were modeling ourselves after Cisco. If you look before Cisco, people used general purpose computers, UNIX workstations, Sun workstations for their routers. Cisco came along and built a box that was just for routing. It did less than a general purpose computer and so the conversation we kept having with the VCs, they would say, “So your box does more than a general purpose computer so it’s better?” And we’d say, “No, no, it does less.” And they’d go, “Well, why would I pay you money to start a company that does less?” And we said, “No, no, it’s focused. It’ll do it better.”

He continues:

The other analogy that we used was a toaster. If you want to make toast, you could go put in on your burner, you can put it in the oven and it’s certainly the case an oven’s a lot more of a general purpose tool than a toaster. A toaster? What good’s a toaster? All it does is make toast. But, man, if you want toast, a toaster really does a good job, you know? Try making toast in your oven and you’ll discover how awesome a toaster is. And that really, for storage over the network, it was that idea of making a device to do just that one thing, focused. Not run programs, not do spreadsheets, not be a tool for programmers, just store the data.

I’ve always stuck by the toaster model when thinking about appliances for customers.

So what does this mean for the user?

Let’s talk about product requirements.

When a vendor ships an appliance to the customer, everything is included: The OS, the management tools, and the application. The beauty of this approach is that the customer no longer has to install software. The customer is no longer responsible for dealing with OS patching and ensuring the right OS patches are installed that match with the requirements of the application.

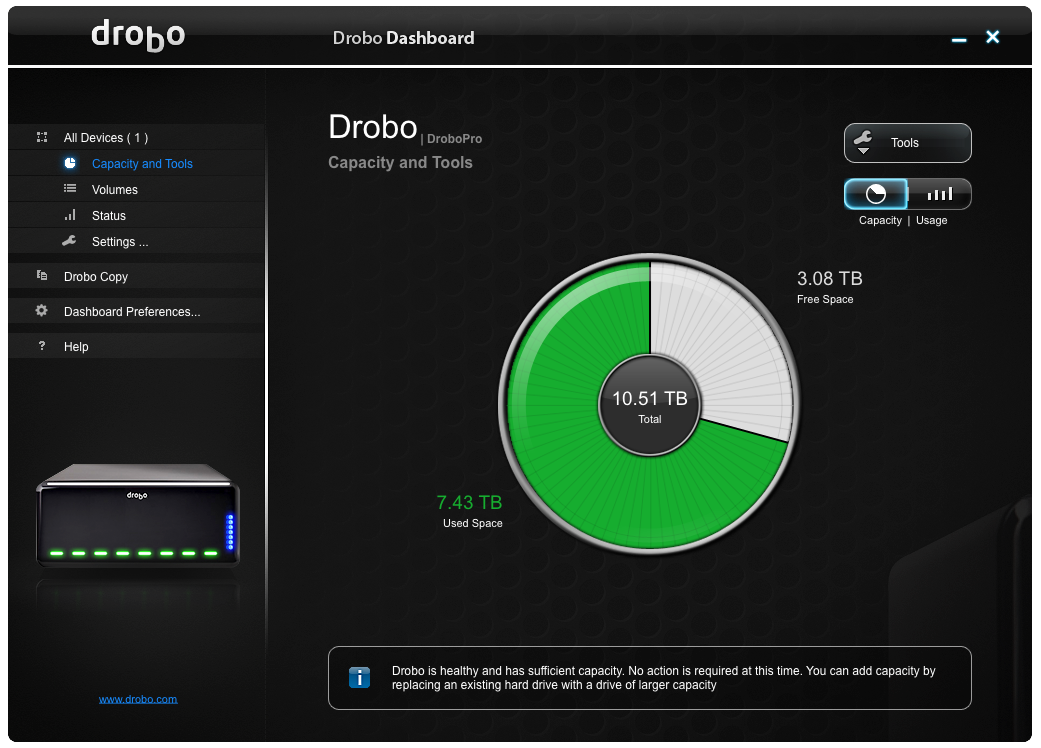

The user interface should be stripped down and minimal. The user should never see a general purpose bash shell prompt. The appliance should be as self-contained as reasonable. Configuration should be as minimal as possible. IP address and related information, LDAP or user set-up, NTP and timezone, email and SNMP, application-specific coordinates, and that’s it! This can be implemented in a small X11 set-up GUI (Nasuni does an excellent job here) or a simple ncurses-based text wizard (similar to what ESXi has).

The user should never be told to edit files off in /etc or manually tweak key-value pairs to get the basic application up and running. Some application programmers get off on connecting to a customer-provided database. In a particularly large installation, this might make sense. To store 500 rows of data, it does not.

An appliance is likely Linux-based these days, as it should be. Software updates should come from an update server provided by the vendor. From the user’s perspective, there is no apt-get or yum details to worry about; it’s a simple matter of clicking a ‘Upgrade’ button. Anyone shipping a Windows-based appliance should only do so for very specific reasons (e.g., need access to Windows APIs).

The GUI for the appliance should be web-based. These days, it needs to be accessible from a variety of browsers. Product managers need to understand the average IT guy has more things to worry about than a single product, and hints need to be provided on how to find the management UI for the appliance. I love how HP handles this on their switches and I adopted their model at my last company: Every login console tty included the IP address and how to find the GUI; both shell and GUI login screens included the default account name and password, right there. The last time you installed a home router, did you have to fire up Google and search for the default user name and password? Or did you read the documentation? Did you read the documentation for Tetris? Why should the default user name and password be harder than playing Tetris?

DHCP should be the default on a first boot, and active on all interfaces with a link. Anyone shipping an appliance without DHCP in this day and age needs to get with the times.

Any CLI should be succinct and hierarchical. Enterprise IT gets tricky, no matter how we simplify it, and users need a way to drill into the available commands. The CLI needs to balance “learning” with speed of use. The reality is, most IT folks are using a lot of different products throughout the day. Make yours the one that is easiest for the admin to jog his mind on how to see replication status (or whatever).

When it comes to lights-out management, product managers and engineers must understand that the product is not the center of the end-user’s universe. Problems and reports should be available via email. With a generated email, the subject line is critical and should be succinct but descriptive. “2 alerts (product name)” with a From: field of the hostname is a good start at a subject line. Failures and recovery from failures should be as automatic as possible. Customers should never have to login to click something in a GUI that the product could have run itself to recover from an error.

Although not strictly appliance-related, let’s also talk about sockets and ports of the TCP/IP variety. When it comes to networking, products should be designed with WANs, VLANs, routers, closed ports, and different views of networks when communicating with other instances of the same product. Don’t rely on ICMP pings being available. Don’t rely on your connection target matching the listening target coordinates. Have flexibility. Design your network protocols for the simplest firewall configurations. In a spoke/hub topology, all the spokes should reach into the hub–that is, all spokes open sockets on a port the hub is listening on, even if the logical client/server is the other direction.

Shipping a Virtual Appliance

This is where I see the most sin in the market today. So many folks install Ubuntu, install their software, shutdown the VM, zip it up, ftp it over to their web site and call it a “virtual appliance”. This is a VMDK full of crap. /root/.bash_history is still populated, as are DHCP cache files, log files, and other things that should be scrubbed. There is no OVF installer file. Old snapshots of the VM are included. What kind of embarrassing stuff is in there?

Let me state up front that OVF is one of the dumbest things in the VMware world. There is no need for it to exist; everything that it does could have been accomplished much more simply. But VMware doesn’t like to make things easy, so we’re stuck with it. On the bright side, the user experience of installing an OVF appliance into a VMware environment is awesome. Thus, vendors need to use it.

From a mindset perspective, the vendor needs to get into a mental mode of a manufacturing process to scrub the VM prior to OVF packaging. Things need to be set to “factory defaults”.

With both physical and virtual appliances, the size of Linux distribution should be minimal. This is more important with the virtual appliance distribution, since customers will be downloading it. As a vendor, you can pick a few approaches on how you do this, but in general, reduce the number of packages you’re including to the bare minimum. You need to tune your CPUs and RAM to the minimum required for your application and let customers tune up from there. If you’re shipping both virtual and physical appliances, you need to understand these are different beasts. How will you sell them? My bet is that you’re pushing virtual appliances into remote-office/branch-office use cases, so size the requirements for the VM appropriately. Size your VMDKs down to something reasonable or use thin-provisioning. Note that OVFs with thin-provisioning will not work on ESX versions less than 4.1 or 4.5 (I can’t remember exactly which versions are broken for thin-provisioning with OVF).

Shipping a Physical Appliance

Ten years ago, I worked on my first appliance product. One of the problems we had with this physical appliance when we went out in the field was that we needed a screwdriver to get it in the rack. Customers sometimes had to spend 10 minutes searching for tools if they were installing it themselves. We started to include a multi-tip screwdriver with the product in the shipping carton. In 2010, my new company had the opportunity to buy a few systems from the previous company. The screwdriver was still included. If I ever do a physical appliance again, I will include the screwdriver. What’s $10 on a $50,000 system anyway? Don’t forget this is a branding opportunity–put your logo or URL on the handle.

If your system is heavy, ship it on a pallet. If you’re on the border (70-80 lbs), pay attention to the condition that the system packaging is in when it arrives at customer sites and adjust accordingly.

Pick rails that don’t suck and work in 2-post and 4-post racks.

Use fans that are quiet. Spend a bit of time to add software support to control the fans appropriately. If you own a previous product I worked on with loud fans, I apologize. I really do.

There are lots of little details to take care of to finish a solid appliance offering. The application should be monitored by a watchdog (inittab, eventd) so when it goes down, it comes back up. Log file size and rotation should be set up. Cores should be constrained if runaway coring occurs. The application should start when the appliance is booted by default. It sounds obvious, but I’ve seen a few appliances that didn’t do this. Vendors should change /etc/rc.sysinit so that when fsck fails during boot, customers are instructed to call the vendor, or dropped into an emergency shell, rather than prompted for a root password. Vendors need to abstract configuration variables set by the users from their destination /etc files. It needs to be easy for the user to back-up, reset, and re-apply configuration settings.

Where Do We Go From Here?

Lots of applications are going to the cloud, which is fantastic. We’ll continue to see more of that. But we’ll still need to have software in our data centers. And it would be nice to see much of that software shipping as an appliance. I hate installing software applications on an OS for IT workloads. It’s stupid, it’s complex, and it’s largely unnecessary. Anyone who has installed a complex application knows how stupid it can be. Installing a TSM server package on a CentOS base is a quick 200 step process and the fact that even I could make it work means it was probably pretty easy.

Over time, we might find that some of the Cloud APIs are enabling compute resources with a different layer underneath than the traditional OS. A proper API underneath that extends onto physical and service offerings that can help standardize some of these appliance computing characteristics would be wonderful, but I don’t see how anyone can make money developing such a thing, so I am not optimistic that it will come to be.

So, remember, an appliance:

- Does one thing. It is focused and minimal.

- Is stripped down. There’s no bash shell for the user. The UI is focused, concise, web-based.

- Is lights-out. It recovers from failure. It is well behaved. It handles updates easily.

- Reduces burden on the user.

Vendors need to quit shipping software junk that they call an appliance. Customers need to demand more; it’s amazing how much bad software IT has to deal with. Stop adding to the pile.

Ship something good.

Many thanks to my colleagues and friends who reviewed this post before publishing.